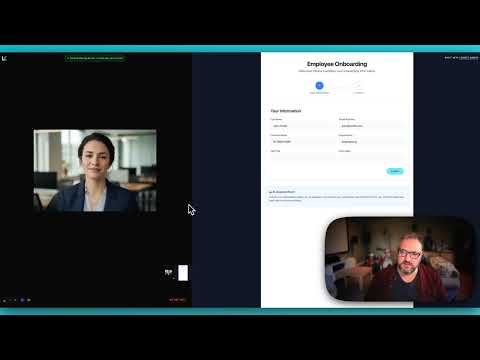

Demo

See the Anam + LiveKit integration in action with our onboarding assistant demo:

View Demo

Source Code

Full source code for the onboarding assistant demo with Gemini vision and screen share analysis.

Installation

Quick Start

The simplest way to add an Anam avatar to your LiveKit agent:

Architecture

The Anam LiveKit plugin acts as a visual layer for your AI agent:

Configuration

Environment Variables

Get your API credentials

You’ll need credentials from at least three services:

| Service | Where to get it |
|---|---|
| Anam | Anam Dashboard |
| LiveKit | LiveKit Cloud or self-hosted |
| Other Providers | Deepgram, ElevenLabs, OpenAI, Google AI Studio, etc. |
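A missing credential is easier to diagnose at startup than in the middle of a session. The following is a minimal sketch of such a check, assuming the agent reads its credentials from environment variables. The variable names are the ones this guide mentions under Troubleshooting; the helper `missing_env_vars` is hypothetical and not part of the Anam or LiveKit SDKs. Keys for other providers (LLM, speech) are not covered and would need to be added to the tuple.

```python
import os

# Variable names taken from this guide's Troubleshooting section.
# Provider keys (LLM, STT, TTS) vary by setup and are not listed here.
REQUIRED_VARS = (
    "ANAM_API_KEY",
    "ANAM_AVATAR_ID",
    "LIVEKIT_URL",
    "LIVEKIT_API_KEY",
    "LIVEKIT_API_SECRET",
)


def missing_env_vars(env=None, required=REQUIRED_VARS):
    """Return the names of required variables that are unset or blank.

    `env` defaults to the process environment; pass a dict to test the
    check without touching real settings.
    """
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name, "").strip()]
```

Calling `missing_env_vars()` before the agent starts and stopping if the returned list is non-empty turns a silent misconfiguration into a clear error message.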

PersonaConfig Options

Configure your avatar’s identity:

Choosing an Avatar

Stock Avatars

Browse ready-to-use avatars in our gallery. Copy the avatar ID directly into your config.

Custom Avatars

Create your own personalized avatar in Anam Lab with custom appearance and style.

Advanced Examples

Using Gemini with Vision

This example shows how to use Gemini Live for multimodal conversations with screen share analysis:

Adding Function Tools

Extend your agent with custom tools that can take actions:

Running Your Agent

- Development

- Production

Use the LiveKit CLI for local development:

This connects to your LiveKit server and automatically joins rooms when participants connect.

Use Cases

The Anam + LiveKit combination is ideal for scenarios requiring voice interaction with visual presence:

Employee Onboarding

Guide new hires through forms and processes with screen share analysis. The AI sees what they see and provides contextual help.

Educational Tutoring

Help students with homework by seeing their work. The avatar can point out errors and explain concepts visually.

Technical Support

See customer screens and provide step-by-step guidance with a friendly visual presence.

Healthcare Intake

Assist patients filling out medical forms with a calm, reassuring avatar presence.

Financial Services

Guide users through account opening, KYC processes, and complex financial forms.

Troubleshooting

Agent won't connect to LiveKit

- Verify `LIVEKIT_URL`, `LIVEKIT_API_KEY`, and `LIVEKIT_API_SECRET` are correct

- Check that your LiveKit server is accessible

- Ensure WebSocket connections aren’t blocked by a firewall

- Test connectivity at meet.livekit.io
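A malformed server URL is a common cause of the first item in the list above. The sketch below checks the shape of the URL only, assuming the agent connects over WebSocket (`ws://` or `wss://`). The helper `check_livekit_url` is hypothetical; it does not contact the server or validate the API key and secret.

```python
from urllib.parse import urlparse


def check_livekit_url(url):
    """Return a list of problems found in a LiveKit server URL.

    An empty list means the URL looks structurally fine. This inspects
    the string only; it does not open a network connection.
    """
    problems = []
    parsed = urlparse(url.strip())
    # Assumption: the agent is configured with a WebSocket URL.
    if parsed.scheme not in ("ws", "wss"):
        problems.append("scheme should be ws:// or wss://, got %r" % parsed.scheme)
    if not parsed.hostname:
        problems.append("URL has no hostname")
    return problems
```

For example, `check_livekit_url(os.environ["LIVEKIT_URL"])` (with `os` imported) reports a missing scheme, which typically happens when only the hostname was pasted into the configuration.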

Avatar not appearing

- Verify your `ANAM_API_KEY` is valid

- Check that `ANAM_AVATAR_ID` matches an existing avatar

- Review agent logs for Anam connection errors

- Ensure the avatar session starts before the agent session

No voice response

- Check your LLM API key is valid (OpenAI, Gemini, etc.)

- Verify microphone permissions in the browser

- Look for API errors in the agent logs

- Confirm the agent is receiving audio tracks

Screen share not detected

High latency or choppy audio

- Check your network connection stability

- Consider using LiveKit Cloud for optimized routing

- Reduce video sampling frequency if CPU-bound

- Monitor your LLM API response times
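Reducing the video sampling frequency, as suggested in the list above, amounts to skipping frames that arrive too soon after the last processed one. The following is a minimal, library-independent sketch of that idea. The `FrameSampler` class is hypothetical and not part of the LiveKit or Anam APIs; how frames reach your code depends on your agent setup.

```python
import time


class FrameSampler:
    """Lets at most one frame through per `interval` seconds."""

    def __init__(self, interval, clock=time.monotonic):
        self.interval = interval
        self._clock = clock  # injectable so the logic can be tested
        self._last = None    # time of the last accepted frame

    def should_process(self):
        """Return True if enough time has passed since the last accepted frame."""
        now = self._clock()
        if self._last is None or now - self._last >= self.interval:
            self._last = now
            return True
        return False
```

In a frame-handling loop, call `should_process()` for each incoming frame and forward the frame to the vision model only when it returns `True`. Raising `interval` trades visual responsiveness for lower CPU load.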

API Reference

AvatarSession

The main class for integrating Anam avatars with LiveKit agents.

Configuration for the avatar’s identity and appearance.

Anam API endpoint. Override for staging or self-hosted deployments.

PersonaConfig

Display name for the avatar. Used in logs and debugging.

UUID of the avatar to use. Get this from the Avatar Gallery or Anam Lab.
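Because the avatar ID is a UUID, a typo or a truncated copy can be caught locally before any request is made. The sketch below assumes the ID is supplied in the canonical hyphenated form (8-4-4-4-12 hex digits); `is_valid_avatar_id` is a hypothetical helper, and passing the check does not prove the avatar exists in your account.

```python
import uuid


def is_valid_avatar_id(value):
    """Return True if `value` is a UUID string in canonical hyphenated form."""
    try:
        parsed = uuid.UUID(value)
    except (ValueError, AttributeError, TypeError):
        # Not a string, or not parseable as a UUID.
        return False
    # uuid.UUID also accepts braces and unhyphenated hex; require the
    # canonical form so partially pasted values are rejected.
    return str(parsed) == value.lower()
```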

Methods

start()

Starts the avatar session and connects it to the LiveKit room.

The LiveKit agent session to connect the avatar to.

The LiveKit room instance from the job context.

Resources

Cookbook: Getting Started with LiveKit

Build a LiveKit voice agent with an Anam avatar from scratch

Cookbook: Gemini Vision + Screensharing

Add Gemini Vision to a LiveKit agent for screen share analysis

LiveKit Documentation

Official LiveKit docs for real-time communication

Avatar Gallery

Browse available stock avatars